
I am a Lecturer with the School of Artificial Intelligence, Anhui University, Hefei, China. I received my Ph.D. degree from the Institute of Information Science, Beijing Jiaotong University (BJTU), China, in 2021.

Google Scholar / GitHub

My research interests are computer vision and machine learning. Specifically, I am interested in using deep learning to solve practical problems in industry, such as limited computing resources and the trade-off between computation and accuracy. My research focus is mainly on:

🚩 [2023.12] One paper was accepted by AAAI 2024.

🚩 [2023.07] One paper was accepted by ICCV 2023.

🚩 [2022.04] One paper was accepted by IJCAI 2022.

You can find the full list on Google Scholar.

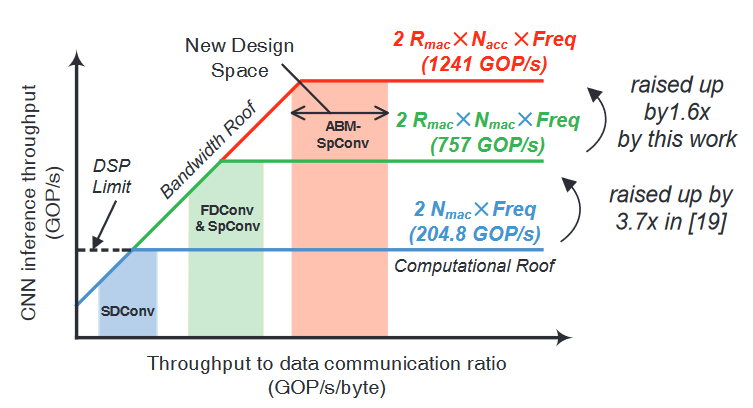

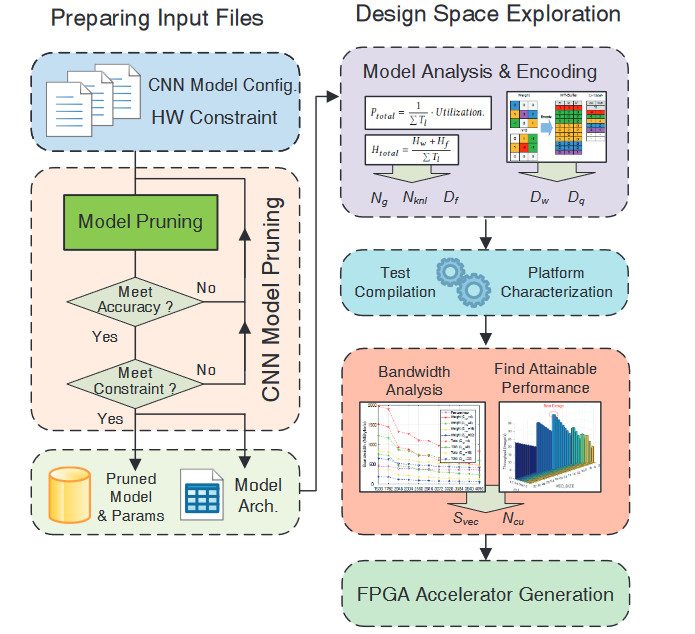

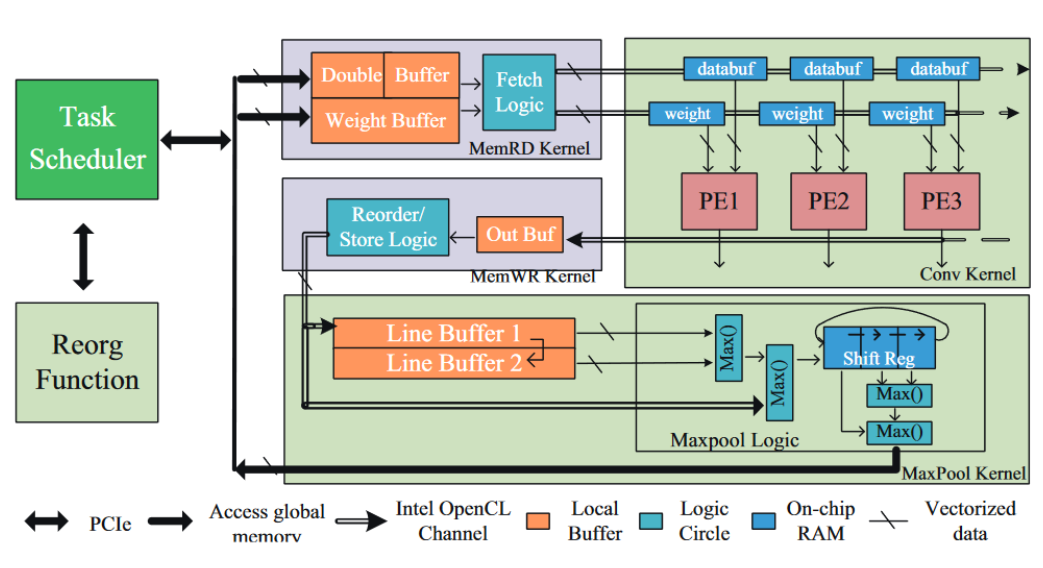

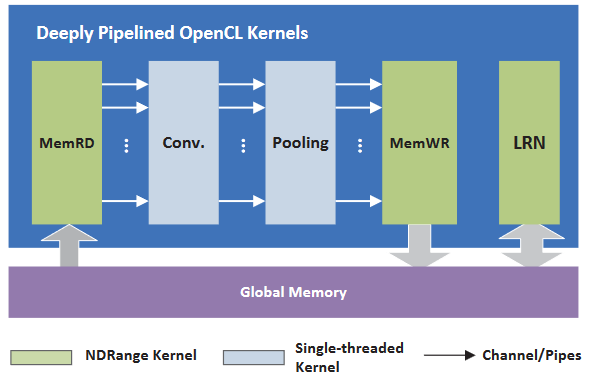

This work presented the first high-throughput FPGA accelerator design targeting the efficient implementation of sparse convolutional neural networks.
